Phase 4 and real-world evidence are not synonyms for post-approval research. Phase 4 is a specific regulatory milestone: an interventional clinical trial that follows drug approval, often required as a condition of that approval. Real-world evidence spans the entire drug development lifecycle, from natural history studies running before Phase 1 to long-term safety and effectiveness programs active years after a product reaches the market. This piece covers the definitional line between the two, the main types of RWE study and what each is built to answer, where the two approaches genuinely converge, how their infrastructure requirements differ, and how to think about both as part of a coordinated post-approval evidence strategy.

Post-approval, sponsors often manage two distinct evidence streams simultaneously. The Phase 4 program fulfills regulatory commitments made at the time of approval. The real-world evidence program builds the effectiveness and safety story for payers, medical affairs, and long-term label development. Both can be required by regulators. Both generate data that agencies and payers review in their assessments. What separates them is the question each is built to answer and the methodological logic that question demands.

That distinction matters commercially and scientifically. A payer reviewing coverage decisions for a specific patient population needs effectiveness data from real clinical practice, not a controlled trial designed to satisfy a regulatory commitment. A regulator reviewing a post-marketing commitment needs the interventional evidence that commitment specified, not observational data collected under routine care. Getting the right evidence to the right stakeholder requires treating these as separate programs from the start.

The word that derails most conversations: “trial”

There is a reason RWE practitioners react when someone uses the word “trial” in a meeting about observational studies. It signals a category error that runs deeper than terminology. Phase 4 is a clinical trial. An RWE study is not.

Phase 4 comes after approval, but it retains the defining characteristics of a trial: a prospective protocol, a schedule of events with specific visit windows, and often randomized or protocol-assigned treatment. The FDA or EMA may mandate it as a Post-Marketing Requirement (PMR), a study or clinical trial required as a condition of drug approval, to verify benefit or address a safety signal identified before approval.[1] For drugs approved through accelerated pathways, failure to complete confirmatory post-marketing studies can trigger regulatory proceedings that may ultimately lead to withdrawal of marketing authorization.[1]

A real-world evidence study follows patients as they are naturally seen in clinical practice, under standard of care. The study does not introduce a treatment as part of the study design. That is the definitional boundary between a clinical trial and an observational study under international GCP standards.[2] The moment you assign a patient to a treatment as part of the study protocol, you have crossed into trial territory. One important nuance: pragmatic clinical trials often look observational in practice, because they allow flexibility in how care is delivered and may draw on routine data. They are still interventional by design, because treatment assignment is part of the protocol.

Two questions, two designs

The clearest way to distinguish Phase 4 from RWE studies is through the question each is built to answer.

Phase 4 asks about efficacy and safety: does this drug work under controlled conditions, in a defined population, measured against a protocol-prescribed endpoint, and what safety signals emerge under those conditions?[3] Participants in a Phase 4 study know they are in a study. Their visits, labs, and assessments are scheduled and tracked according to a rigid protocol. Every data point was planned for in advance.

An RWE study asks about effectiveness and tolerability: how does this drug actually perform when patients are seen as they would normally be seen, without study-imposed visits or procedures, and how well do they tolerate it over time in real clinical practice?[3] The difference between those two questions runs through every design decision that follows.

Four dimensions separate the typical Phase 4 study from the typical RWE study:

| Dimension | Phase 4 | RWE study |
| --- | --- | --- |
| Primary question | Efficacy | Effectiveness |
| Safety characterization | Safety under controlled conditions | Tolerability in real-world clinical practice |
| Patient population | Homogeneous (protocol-defined eligibility criteria) | Heterogeneous (broad clinical practice, fewer exclusions) |
| Study design | Controlled (interventional) | Observational |

This distinction also matters commercially. A drug can clear every Phase 4 commitment and still face skepticism from payers who want to know what the outcomes look like in the actual patient population they cover. That question can only be answered with real-world evidence.

A practical test: Ask whether the study introduces a medical intervention as part of the protocol. If yes, it is a clinical trial regardless of where it sits in the development timeline. If no, and patients are observed under standard of care, it is an observational study.
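The practical test can be written down as a one-branch decision rule. The sketch below is illustrative only: the `StudyDesign` class, its field names, and the `classify` function are hypothetical and not taken from any regulatory text or software. It shows that the only input that changes the answer is whether the protocol itself introduces the intervention; the timing of the study and its use of routine data do not.

```python
from dataclasses import dataclass


@dataclass
class StudyDesign:
    """Hypothetical summary of a study design, for illustration only."""
    name: str
    protocol_assigns_treatment: bool  # does the protocol introduce the intervention?
    post_approval: bool               # timing relative to marketing approval
    uses_routine_care_data: bool      # e.g. draws on EHR or registry data


def classify(study: StudyDesign) -> str:
    """Apply the practical test: the protocol-assigned intervention decides."""
    if study.protocol_assigns_treatment:
        # Interventional by design, wherever it sits in the development timeline.
        return "clinical trial"
    # Patients are observed under standard of care.
    return "observational study"


pragmatic = StudyDesign("pragmatic trial", True, True, True)
registry = StudyDesign("pregnancy registry", False, True, True)
natural_history = StudyDesign("natural history study", False, False, True)

for s in (pragmatic, registry, natural_history):
    print(f"{s.name}: {classify(s)}")
```

The pragmatic trial is classified as a clinical trial even though it draws on routine data, which matches the nuance described earlier: flexible delivery of care does not change the fact that treatment assignment is part of the protocol.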

What RWE studies actually look like

Knowing what RWE is not — a clinical trial — only gets you so far. The more useful question is what it actually is in practice. Real-world evidence is not a single study type. The programs that fall under that umbrella differ significantly in design, regulatory standing, and what they can credibly demonstrate. Understanding those differences is what turns “we should run some RWE” from a vague intention into a fundable, stakeholder-specific program.

Post-Authorization Safety Studies (PASS) are among the most common. Mandated by EMA under its formal PASS framework and required by FDA under its Post-Marketing Requirements structure, these studies collect long-term safety data on approved drugs in broader populations than were studied in clinical trials.[1][4] Pregnancy registries are a well-known example: pregnant patients who receive an approved medication as part of their normal care are enrolled so that maternal and infant outcomes after exposure can be tracked over time. The data are observational and uncontrolled by design, and that is precisely what makes them informative for long-term safety surveillance in real patient populations.

Post-Authorization Efficacy Studies (PAES), often described as post-authorization effectiveness studies, are studies required or recommended by EMA after approval; the observational designs discussed here characterize how a medicine performs under real-world conditions. Where PASS addresses safety, PAES addresses effectiveness: how does the drug actually perform across the broader patient population that receives it outside a trial protocol? PAES data directly bridge the gap between efficacy measured in controlled trials and effectiveness in clinical practice, and they are increasingly cited as part of the market access dossier.[4]

Natural history studies document the course of a disease without any intervention. They can be prospective, enrolling participants and following them forward in time, or retrospective, drawing on data already captured in existing medical records. In rare disease drug development, natural history studies often run before or alongside Phase 1 and 2 clinical trials. They answer a question no randomized trial can: what happens to patients with this condition if you do not intervene? That data informs endpoint selection and helps sponsors identify outcomes that are both measurable and meaningful to patients. In some cases, it supports the development of novel endpoints grounded in patient experience, which is relevant to FDA’s patient-focused drug development program.[5][6]

Health Economics and Outcomes Research (HEOR) studies use real-world data to examine the economic and clinical value of a treatment in clinical practice. They capture outcomes including costs, resource utilization, quality of life, and productivity, in patient populations that reflect routine care rather than trial eligibility criteria. Payers increasingly require HEOR evidence as part of the reimbursement and formulary review process, making it an integral part of the evidence generation strategy for most launched products.

External Control Arms (ECAs) use patient data from outside the study as a comparator group in lieu of a concurrent randomized control. The data may come from electronic health records, registries, or prior clinical studies. FDA’s 2023 draft guidance on externally controlled trials addresses this approach and outlines conditions under which it may be appropriate when a concurrent randomized control arm is not feasible.[7]

| Study type | Design | Primary question | Typical use |
| --- | --- | --- | --- |
| Phase 4 clinical trial | Interventional, prospective protocol | Does it work (efficacy) under controlled conditions? | Confirmatory PMR, label expansion |
| PASS / Post-marketing safety study | Observational, prospective or retrospective | Is it safe in the real-world patient population? | Safety surveillance, regulatory commitment |
| PAES / Post-authorization effectiveness study | Observational, prospective or retrospective | Is it effective in real-world clinical practice? | Effectiveness evidence, market access support |
| Natural history study | Observational, longitudinal (prospective or retrospective) | What happens to patients without intervention? | Endpoint development, rare disease, pre-trial planning |
| HEOR study | Observational, typically retrospective | What is the economic and outcomes value in clinical practice? | Reimbursement dossiers, formulary decisions, market access |
| Externally controlled trial | Single-arm trial with external comparator | Does it work vs. real-world comparator patients? | Rare disease, small populations, when randomization is not feasible |

Where Phase 4 and RWE genuinely converge

Most of those study types sit clearly on one side of the interventional/observational line. There is a small category of design approaches where Phase 4 methodology and real-world data genuinely meet. These are deliberate, well-defined choices that draw on real-world data to address specific constraints, not evidence that the distinction between trials and observation has blurred.

Synthetic Control Arms represent the clearest convergence point. A Synthetic Control Arm takes the External Control Arm concept further. Where an ECA draws directly from a real-world patient cohort to create a historical comparator, a Synthetic Control Arm uses advanced statistical methods to construct a comparator group from real-world patient-level data, creating what is sometimes described as a digital twin of the treated population. The comparator is not a group of actual patients who received standard of care alongside the treatment group. It is statistically derived from patient-level real-world data to approximate what that group would have looked like.

FDA maintains significant methodological scrutiny over these approaches, and they are appropriate in specific, well-defined circumstances. The relevant scenarios span multiple phases of development. In Phase 2 proof-of-concept work, a synthetic control arm can generate early efficacy signals in rare or pediatric populations without enrolling a concurrent control group while early safety data are still limited. In Phase 3, the clearest cases involve terminal illness, rare disease, or pediatric settings where the ethical or practical barriers to randomization are high and a real-world comparator can credibly substitute for a concurrent control arm. In Phase 4, synthetic controls appear most often in indication expansion programs, long-term safety assessments, and comparative effectiveness work, where sponsors need to generate evidence on new populations or endpoints without running a new full-scale controlled trial.

They are not a general alternative to randomization, and FDA’s 2023 draft guidance is explicit about the methodological standards required for this evidence to be accepted.[7]
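The text above does not name the statistical methods involved. As a toy illustration of what "constructing" a comparator from patient-level data can mean, the sketch below assumes one simple technique: greedy 1:1 nearest-neighbor matching without replacement on a single precomputed baseline score (for example a propensity score), with a caliper that rejects poor matches. The function name, the patient IDs, and the scores are all hypothetical, and real programs use far richer covariate sets and diagnostics than this.

```python
from typing import Dict


def match_controls(
    treated: Dict[str, float],
    candidates: Dict[str, float],
    caliper: float,
) -> Dict[str, str]:
    """Greedy 1:1 nearest-neighbor matching on a single score.

    treated / candidates map a patient ID to a baseline score.
    A treated patient stays unmatched if no remaining candidate
    lies within `caliper` of their score.
    """
    available = dict(candidates)  # copy so the caller's data is untouched
    matches: Dict[str, str] = {}
    # Process treated patients in score order so results are deterministic.
    for patient_id, score in sorted(treated.items(), key=lambda kv: kv[1]):
        if not available:
            break
        nearest = min(available, key=lambda c: abs(available[c] - score))
        if abs(available[nearest] - score) <= caliper:
            matches[patient_id] = nearest
            del available[nearest]  # matching without replacement
    return matches


# Hypothetical scores for three treated patients and three real-world candidates.
treated = {"T1": 0.30, "T2": 0.62, "T3": 0.10}
real_world = {"C1": 0.28, "C2": 0.65, "C3": 0.90}

print(match_controls(treated, real_world, caliper=0.05))
```

Here T3 is left unmatched because no candidate falls within the caliper. Dropping treated patients who have no credible counterpart is one reason these designs only work when the real-world data source resembles the trial population closely.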

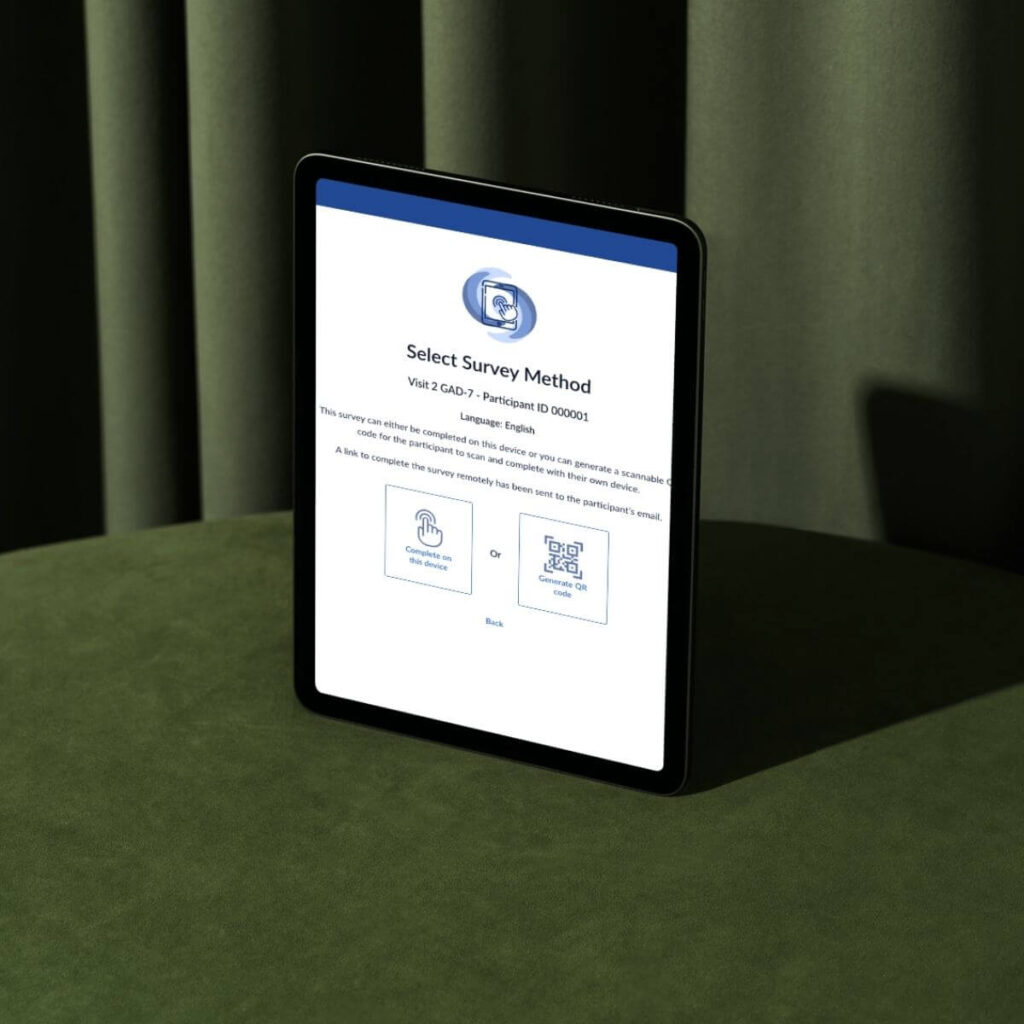

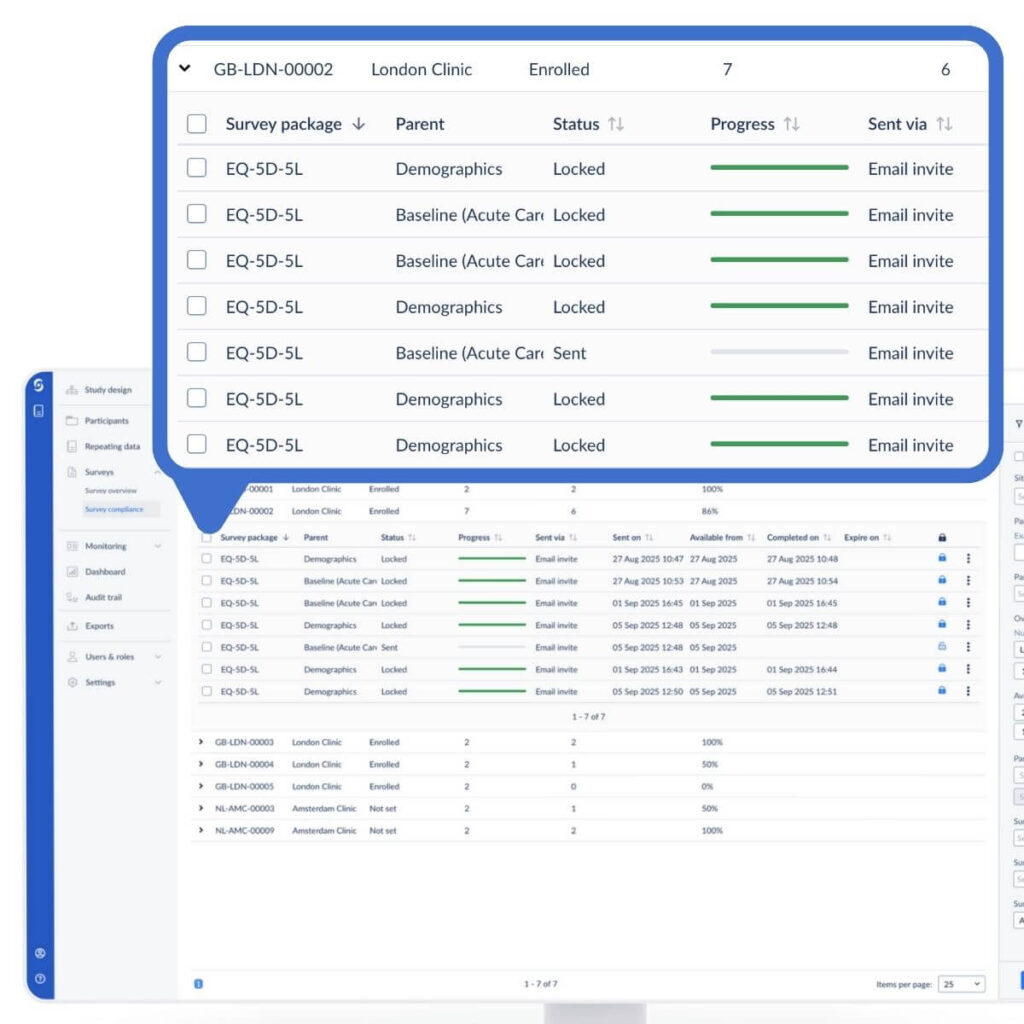

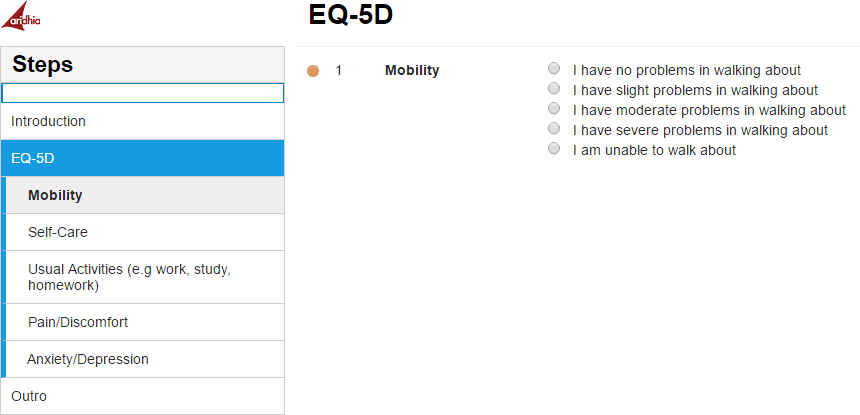

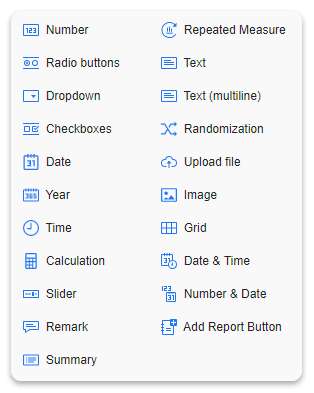

Technology: same appearance, different requirements

Phase 4 and RWE studies make fundamentally different demands on data infrastructure. Both rely on electronic data capture systems and both are moving toward more direct engagement with patients through ePRO and eCOA solutions. But what each program needs from those systems reflects the underlying difference between a controlled trial and an observational study.

Phase 4 needs protocol enforcement. The system has to support a rigid schedule of events, flag missed or out-of-window visits, and maintain the audit trail and data integrity requirements of GCP. eSource integration, where EHR data flows directly into the trial database, is supported by FDA guidance and increasingly used to reduce manual transcription and accelerate data collection, though adoption remains uneven across sites and regions.[8]
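As a rough illustration of what flagging missed or out-of-window visits involves, the sketch below checks one visit against a protocol-defined window. It is a minimal example under stated assumptions, not how any particular EDC implements the check: the `ScheduledVisit` class, the `visit_status` function, and the week-12 visit with a seven-day window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ScheduledVisit:
    """Hypothetical protocol visit definition."""
    name: str
    target_day: int   # days after baseline
    window_days: int  # allowed deviation, plus or minus


def visit_status(
    visit: ScheduledVisit,
    baseline: date,
    actual: Optional[date],
    today: date,
) -> str:
    """Return 'in_window', 'out_of_window', 'missed', or 'pending'."""
    target = baseline + timedelta(days=visit.target_day)
    earliest = target - timedelta(days=visit.window_days)
    latest = target + timedelta(days=visit.window_days)
    if actual is None:
        # No visit recorded: missed only once the window has closed.
        return "missed" if today > latest else "pending"
    if earliest <= actual <= latest:
        return "in_window"
    return "out_of_window"


week12 = ScheduledVisit("Week 12", target_day=84, window_days=7)
baseline = date(2024, 1, 1)

print(visit_status(week12, baseline, date(2024, 3, 20), today=date(2024, 4, 10)))
print(visit_status(week12, baseline, date(2024, 4, 5), today=date(2024, 4, 10)))
print(visit_status(week12, baseline, None, today=date(2024, 4, 10)))
```

An observational study has no equivalent of `target_day`: encounters are recorded when they happen, so a check like this would have nothing to compare against.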

RWE studies need flexibility. Patients in an observational study do not follow a schedule prescribed by the study. They see their doctor when they see their doctor, and the data system has to accommodate natural variance in visit timing, unscheduled encounters, and, in retrospective studies, data entry from existing medical records. An EDC built for Phase 3 protocol rigidity will create friction for teams running a PASS or a registry study, because the study is designed around how patients actually live, not around a visit window.

Federated data networks represent a different infrastructure model built specifically for RWE data collection. In a federated network, patient data never leaves the institution that holds it. Queries go out to partner sites, analysis runs locally at each site, and only aggregate results return to the coordinating center. No patient-level data is transferred or pooled centrally. FDA’s Sentinel System is the clearest regulatory example at scale: it has operated as a full active surveillance network since 2016, spanning dozens of data partners covering hundreds of millions of covered lives across the US, without patient-level data ever leaving the institutions that hold it.[9]
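The query-out, aggregate-back pattern can be sketched in a few lines. This is a simplified illustration, not a description of how Sentinel or any other network is actually implemented: the `DataPartner` class, the `coordinate` function, and the record fields are hypothetical, and real networks add common data models, governance, and small-cell suppression on top.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]


class DataPartner:
    """Hypothetical site that holds patient-level records locally."""

    def __init__(self, name: str, records: List[Record]) -> None:
        self.name = name
        self._records = records  # never returned to the coordinator

    def run_query(self, has_event: Callable[[Record], bool]) -> Dict[str, int]:
        """Run the analysis locally and return aggregate counts only."""
        exposed = [r for r in self._records if r["exposed"]]
        events = sum(1 for r in exposed if has_event(r))
        return {"exposed": len(exposed), "events": events}


def coordinate(
    partners: List[DataPartner],
    has_event: Callable[[Record], bool],
) -> Dict[str, int]:
    """Send the same query to every partner and sum the aggregates."""
    totals = {"exposed": 0, "events": 0}
    for partner in partners:
        result = partner.run_query(has_event)
        totals["exposed"] += result["exposed"]
        totals["events"] += result["events"]
    return totals


site_a = DataPartner("Site A", [
    {"exposed": True, "outcome": "none"},
    {"exposed": True, "outcome": "liver_injury"},
    {"exposed": False, "outcome": "none"},
])
site_b = DataPartner("Site B", [
    {"exposed": True, "outcome": "none"},
    {"exposed": True, "outcome": "none"},
])

print(coordinate([site_a, site_b], lambda r: r["outcome"] == "liver_injury"))
```

The coordinating function only ever sees the two counts each site returns, which is the property the federated model is built around.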

For both decentralized clinical trials and observational studies, the direct-to-patient model is gaining relevance. In RWE especially, the case is straightforward. Pregnancy registries have always needed to reach patients wherever they are, not only at academic medical centers. Oncology and rare disease follow the same logic: patients are often geographically dispersed, often managing complex treatment regimens, and collecting their data from home reduces burden and improves long-term retention.

The strategic frame for post-approval programs

Most sponsors running post-approval programs are operating on both tracks at the same time. A Phase 4 study satisfies a regulatory commitment. RWE studies build the effectiveness story for payers, medical affairs, and long-term label development. The programs serve different stakeholders and answer different questions.

What makes them work together is treating them as exactly what they are: separate programs with separate design requirements. The data strategy, the technology infrastructure, the endpoint selection, and the team running each study all need to reflect the fundamental difference between what a Phase 4 study can prove and what a real-world evidence study can demonstrate.

A Phase 4 study that drifts toward observational methods undermines the clinical trial logic that gives its results regulatory standing. An RWE study forced into a clinical trial framework collects data that no longer reflects how patients actually live. Both programs have real value, but only when they are designed for the questions they are actually built to answer.

Castor supports Phase 4 and real-world evidence study programs with purpose-built data capture designed for the specific requirements of each study type.

Frequently asked questions

What is the difference between a Phase 4 study and a real-world evidence study?

Phase 4 is a post-approval clinical trial. It involves a prospective protocol, defined visit schedules, and is typically mandated by FDA or EMA as a Post-Marketing Requirement (PMR) to confirm clinical benefit or address a safety signal. A real-world evidence study is observational: it follows patients under standard of care, without introducing a medical intervention as part of the study. Phase 4 measures efficacy and safety under controlled conditions, in a homogeneous, protocol-defined population. RWE studies measure effectiveness and tolerability in real clinical practice, across heterogeneous patient populations that reflect how the drug is actually used.

Can real-world evidence replace a Phase 4 clinical trial?

In most cases, no. Where FDA or EMA has mandated a Phase 4 study as a Post-Marketing Requirement, that commitment specifies a study meeting defined design criteria. RWE can supplement the evidence base, and FDA has accepted real-world data in certain regulatory contexts, particularly for externally controlled trials in rare disease or small populations. A confirmatory Phase 4 study required under accelerated approval cannot be replaced by an observational study.

What are the main types of real-world evidence studies?

The main types include Post-Authorization Safety Studies (PASS), which track long-term safety in broader patient populations; Post-Authorization Efficacy Studies (PAES), which characterize real-world effectiveness after approval and are increasingly required by EMA; natural history studies, which document disease progression without intervention and are particularly valuable in rare disease; Health Economics and Outcomes Research (HEOR) studies, which examine costs, resource utilization, and quality-of-life outcomes for payer and market access purposes; disease and drug registries; retrospective chart review studies; and studies using External Control Arms, where real-world patient data serves as the comparator group in lieu of a concurrent randomized control arm.

What is the difference between an External Control Arm and a Synthetic Control Arm?

An External Control Arm (ECA) uses patient data from outside the study as the comparator group, drawing on electronic health records, registries, or prior clinical studies. A Synthetic Control Arm takes this concept further: it uses advanced statistical methods to construct a comparator group from real-world patient-level data, creating what is sometimes described as a digital twin of the treated population. The comparator is statistically derived rather than drawn directly from a real patient cohort. Both approaches are subject to significant FDA methodological scrutiny and are generally appropriate only in rare disease or small patient populations where randomization is not feasible. FDA’s 2023 draft guidance on externally controlled trials addresses the standards both require.

References

1. U.S. Food and Drug Administration. Postmarketing Studies and Clinical Trials. FDCA Section 505(o)(3). Consolidated Appropriations Act of 2023, Section 3210, which expanded FDA authority to initiate expedited withdrawal proceedings for accelerated approval products that fail to verify clinical benefit in confirmatory post-marketing studies.

2. International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use (ICH). ICH E6(R3) Guideline for Good Clinical Practice. 2025. Defines clinical trial and establishes the distinction between interventional and observational research.

3. U.S. Food and Drug Administration. Framework for FDA’s Real-World Evidence Program. December 2018. FDA Center for Drug Evaluation and Research. Addresses the distinction between efficacy measured in controlled trial settings and effectiveness measured through real-world data.

4. European Medicines Agency. Post-Authorisation Safety Studies (PASS) and Post-Authorisation Efficacy Studies (PAES). EMA Pharmacovigilance and Regulatory Science framework. Available at ema.europa.eu.

5. U.S. Food and Drug Administration. Rare Diseases: Natural History Studies for Drug Development. FDA Draft Guidance, March 2019. FDA Center for Drug Evaluation and Research / Center for Biologics Evaluation and Research / Center for Devices and Radiological Health.

6. U.S. Food and Drug Administration. Patient-Focused Drug Development: Incorporating Clinical Outcome Assessments into Endpoints for Regulatory Decision-Making. FDA Guidance for Industry, 2022. CDER/CBER/CDRH.

7. U.S. Food and Drug Administration. Considerations for the Design and Conduct of Externally Controlled Trials for Drug and Biological Products. FDA Draft Guidance, February 2023. CDER/CBER.

8. U.S. Food and Drug Administration. Use of Electronic Health Records in Clinical Investigations. FDA Draft Guidance, 2023. CDER.

9. U.S. Food and Drug Administration. FDA’s Sentinel System. FDA.gov. The full Sentinel System has operated as an active surveillance network since 2016, spanning dozens of data partners covering hundreds of millions of covered lives in the US.